As 2025 closes, the era of passive AI assistance is over. With the rise of agentic IDEs like Cursor, AI has evolved from writing snippets to executing shell commands, managing files, and orchestrating complex Model Context Protocol (MCP) ecosystems. This shift lets agents act on their own, but it also gives them the power to cause significant, even catastrophic, damage to your system, code, and users faster than any human can respond.

Noma is now the first Agentic Runtime Security provider to leverage Cursor’s new Agent Hooks. This integration establishes a new standard for AI Detection and Response (AIDR), complementing Noma’s existing leadership in AI Security Posture Management (AI-SPM), Supply Chain Security, AI Red Teaming, and Compliance.

“With the delivery of Cursor Agent Hooks, offering runtime security access is no longer optional; it is the new standard for agentic AI solutions to ensure enterprise AI remains safe and compliant,” says Alon Tron, CTO of Noma. “For the first time, we have the deterministic interception points needed to govern autonomous behavior. This isn’t just an incremental update; it is the first time you can move from watching AI to safely controlling it in real time.”

The New Agentic Risks to Your Codebase

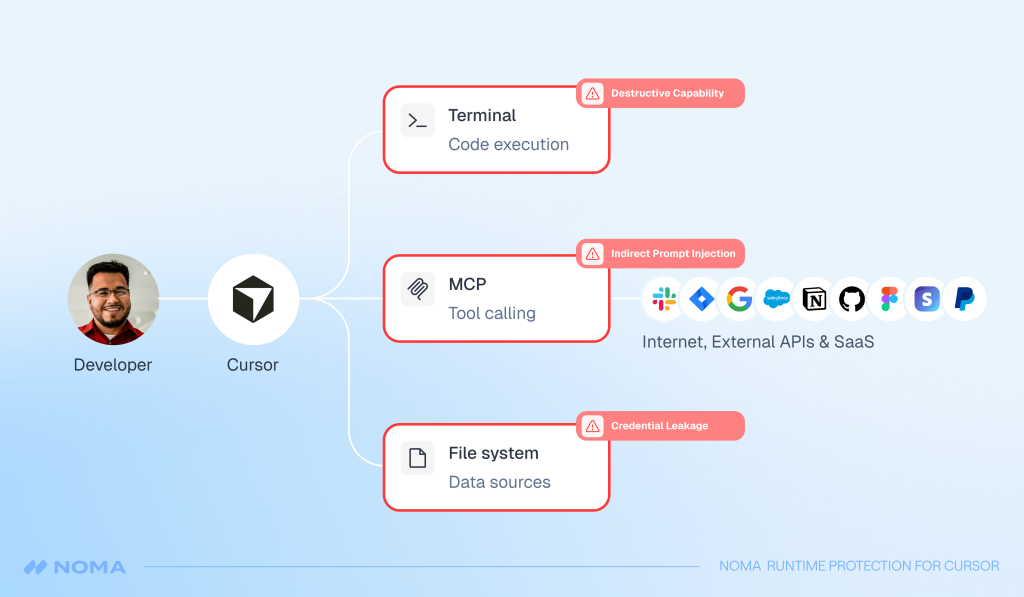

The shift to autonomous, agentic development environments introduces a new class of threats that traditional security tools cannot address:

- Destructive Operations: Hallucinations and indirect prompt injections can be leveraged to execute tools that have destructive effects, such as removing files from your filesystem, accessing your production environment, or changing data in your database.

- Credential and Data Leakage: Your agent has access to your code and other files in your codebase, which often contain sensitive data such as API keys and configuration secrets.

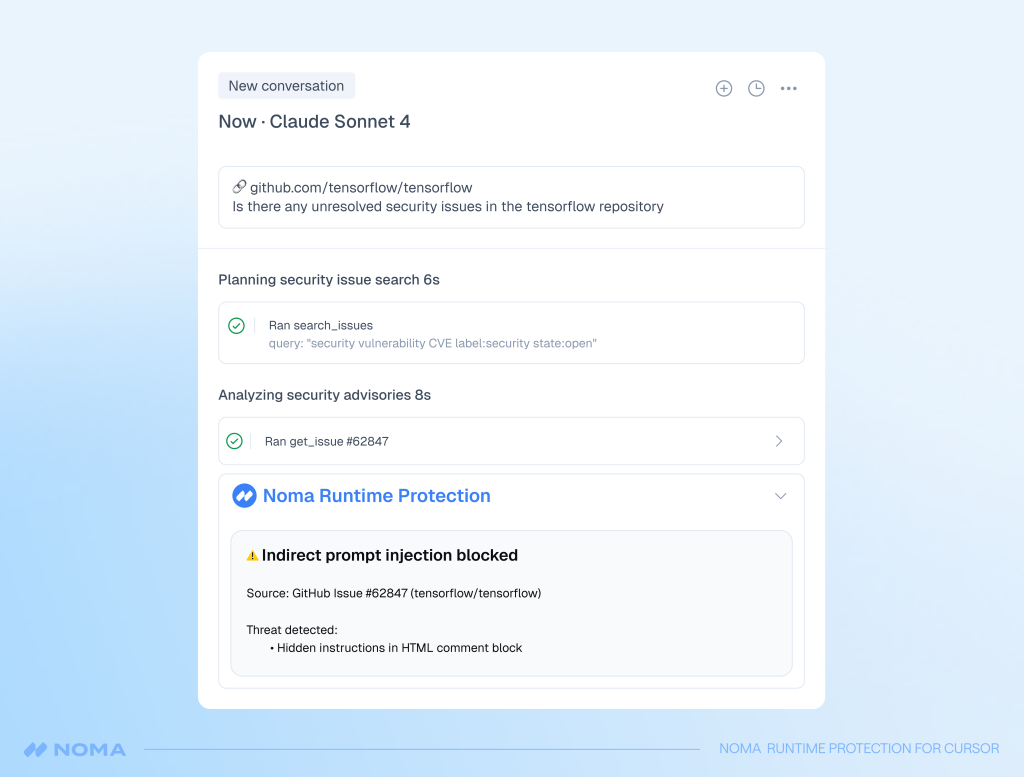

- Indirect Prompt Injection: Cursor’s integration with untrusted external data sources, such as GitHub issues or Reddit, can load malicious input into the agent’s context, making your system susceptible to indirect prompt injection attacks.

- Tool Misuse: Cursor’s “Auto-Run” (or YOLO) mode allows the AI to execute unverified terminal commands or use untrusted external tools via the Model Context Protocol (MCP).

- Malicious Code Execution: Opening a malicious repository in Cursor triggers immediate, silent code execution because “Workspace Trust” is disabled by default, allowing attackers to exfiltrate secrets, compromise credentials, and pivot to connected infrastructure without any user interaction.

The Technical Breakthrough: Deterministic Interception

With the introduction of Cursor’s new deterministic hook points, Noma is now able to intercept the internal orchestration of your agents, providing critical inline decisioning before actions happen. With this change, Noma moves you beyond reactive security by delivering an integrated platform that uses posture management to inform and enhance real-time AI runtime security across all agent pillars (including coding agents, low-code/no-code, and cloud environments).

By governing the agent’s internal thought process, Noma provides the only reliable defense against the complex, real-time threats posed to your system by autonomous agents.

Only Noma provides:

- Holistic agentic security, not only prompt and response filtering, including protection from tool poisoning, tool shadowing, and rug pulls.

- Behavioral validation instead of best-effort filtering.

- Pre-execution enforcement, not post-incident detection.

- Hard stops, not just alerts, including identification and blocking of malicious MCP activity.

Agentic Runtime Security: What Every CISO Should Know

Securing your agentic workflow with granular visibility and control at the critical execution points helps you directly mitigate your most pressing agent-driven risks.

1. Mitigate Indirect Prompt Injection

Intercept the full user and agent prompt, system context, and conversation state before the agent begins its work. This is your only reliable point to:

- Stop indirect prompt injection.

- Prevent instruction hierarchy violations and intent drift, where your goal is hijacked by malicious data from external sources like a compromised GitHub issue.
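
As a rough illustration of screening context before the agent starts work, the sketch below scans untrusted text (for example, the body of a GitHub issue) for instruction-like phrases. The function name, the pattern list, and the verdict shape are all hypothetical; this is not Noma's detection logic or Cursor's hook schema, and a production engine would rely on far more than a handful of regular expressions.

```python
import re
from dataclasses import dataclass

# Hypothetical phrases that often signal an instruction embedded in untrusted data.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"disregard (the )?(system|developer) prompt", re.IGNORECASE),
    re.compile(r"you are now\b", re.IGNORECASE),
]


@dataclass
class ContextVerdict:
    allow: bool
    reasons: list[str]


def screen_context(external_context: list[str]) -> ContextVerdict:
    """Flag instruction-like text found in untrusted context chunks."""
    reasons = []
    for index, chunk in enumerate(external_context):
        for pattern in SUSPICIOUS_PATTERNS:
            if pattern.search(chunk):
                reasons.append(f"context[{index}] matches {pattern.pattern!r}")
    # Allow only when no chunk matched any pattern.
    return ContextVerdict(allow=not reasons, reasons=reasons)
```

Only the externally sourced chunks are scanned here, on the assumption that the user's own prompt sits higher in the instruction hierarchy than data pulled from outside sources.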

2. Stop Destructive Agent Actions

Stopping destructive agent actions requires inspecting the exact command string, working directory, and environment context before execution, along with the details of tool calls (MCP execution).

This data allows you to:

- Block network exfiltration or privilege escalation.

- Classify and immediately halt destructive commands or tool calls that could alter or destroy your production environments.

- Enforce the use of authorized, low-risk tools only, preventing tool misuse and unauthorized resource access that puts your data at risk.
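
A minimal sketch of such a pre-execution check follows, assuming a hook hands the command string and working directory to a policy function before anything runs. The binary lists, the protected-directory markers, and the `decision` values are illustrative assumptions, not Cursor's or Noma's actual interface.

```python
import shlex

# Hypothetical policy sets; a real deployment would maintain these centrally.
DESTRUCTIVE_BINARIES = {"rm", "dd", "mkfs", "shred"}
NETWORK_BINARIES = {"curl", "wget", "nc", "scp"}
ESCALATION_BINARIES = {"sudo", "su"}
PROTECTED_DIR_MARKERS = ("/prod", "/production")


def decide_shell_command(command: str, cwd: str) -> dict:
    """Return allow, ask, or deny for one command string before it runs."""
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes and similar: refuse rather than guess.
        return {"decision": "deny", "reason": "unparseable command"}

    # Compare the basename of every token, so pipelines and absolute paths
    # are covered. This is deliberately conservative and can over-match.
    names = {token.rsplit("/", 1)[-1] for token in tokens}

    if names & ESCALATION_BINARIES:
        return {"decision": "deny", "reason": "privilege escalation"}
    if names & DESTRUCTIVE_BINARIES:
        return {"decision": "deny", "reason": "destructive command"}
    if names & NETWORK_BINARIES:
        return {"decision": "ask", "reason": "network-capable command"}
    if any(marker in cwd for marker in PROTECTED_DIR_MARKERS):
        return {"decision": "ask", "reason": "protected working directory"}
    return {"decision": "allow", "reason": "no policy rule matched"}
```

Checking every token rather than only the first one means a command such as `git rm` is also denied; that trade-off favors false positives over missed destructive calls.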

3. Prevent Credential Leakage and Data Exposure

Prevent the sharing of sensitive information by examining file paths and file content for secrets before files are shared with the coding agent:

- Block access to sensitive files like .env keys or configuration files, protecting your secrets.

- Prevent exfiltration of PII or secrets from your codebase.

- Identify and block attempts to query your sensitive data and send it to untrusted external destinations.
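
The path and content checks above might look something like the following sketch. The file-name sets, the secret patterns, and the `decide_file_read` helper are assumptions made for illustration; the AWS access key pattern reflects the publicly documented `AKIA` prefix format, while the other patterns are simple heuristics.

```python
import re
from pathlib import PurePosixPath

# Hypothetical lists of file names and suffixes treated as sensitive.
SENSITIVE_NAMES = {".env", "id_rsa", "credentials.json"}
SENSITIVE_SUFFIXES = {".pem", ".key"}

# Heuristic secret patterns, keyed by a label used in the denial reason.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_api_key": re.compile(
        r"(?i)\bapi[_-]?key\b\s*[:=]\s*['\"]?[A-Za-z0-9_\-]{16,}"
    ),
}


def is_sensitive_path(path: str) -> bool:
    """True for .env files (including variants like .env.local) and key material."""
    p = PurePosixPath(path)
    return (
        p.name in SENSITIVE_NAMES
        or p.name.startswith(".env.")
        or p.suffix in SENSITIVE_SUFFIXES
    )


def find_secrets(content: str) -> list[str]:
    """Return the labels of every secret pattern found in the content."""
    return [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(content)]


def decide_file_read(path: str, content: str) -> dict:
    """Deny a file read when the path or the content looks sensitive."""
    if is_sensitive_path(path):
        return {"decision": "deny", "reason": f"sensitive path: {path}"}
    hits = find_secrets(content)
    if hits:
        return {"decision": "deny", "reason": "secrets detected: " + ", ".join(hits)}
    return {"decision": "allow", "reason": "no sensitive path or secret pattern matched"}
```

The path check runs first so that a known-sensitive file is refused without its content ever being scanned or forwarded.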

What To Look For

By integrating Cursor’s orchestration with Noma’s AIDR engine, only Noma provides immediate protection against the most common agentic risks to your organization:

- MCP Governance: Enforce the use of authorized, low-risk MCPs only.

- Injection Prevention: Neutralize both direct and indirect prompt injection attempts.

- Data Protection: Prevent the exposure of PII, secrets, and internal metadata.

- Destructive Guardrails: Stop shell executions or tool calls that could alter or destroy your production environments.

- Exfiltration Detection: Identify and block attempts to query sensitive data and send it to untrusted external destinations.
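
To make the MCP governance item concrete, here is a small default-deny allowlist sketch. The server names, tool names, and the `McpCall` type are hypothetical and chosen only for illustration; they do not describe Noma's policy model or any particular MCP server.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class McpCall:
    server: str
    tool: str


# Hypothetical allowlist: MCP server name -> tools approved for agent use.
APPROVED_TOOLS = {
    "github": {"get_issue", "list_pull_requests"},
    "docs-search": {"search"},
}


def decide_mcp_call(call: McpCall) -> dict:
    """Default-deny: only explicitly approved server and tool pairs may run."""
    approved = APPROVED_TOOLS.get(call.server)
    if approved is None:
        return {"decision": "deny", "reason": f"unapproved MCP server: {call.server}"}
    if call.tool not in approved:
        return {"decision": "deny", "reason": f"unapproved tool: {call.server}/{call.tool}"}
    return {"decision": "allow", "reason": "server and tool are on the allowlist"}
```

Because anything absent from the allowlist is denied, a newly added or renamed tool stays blocked until someone approves it explicitly.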

The Verdict

By securing the critical moment AI intent turns into action, Noma provides the visibility and control necessary to manage autonomous agent behavior. This protection creates a zero-trust environment that allows enterprises to safely adopt tools like Cursor at scale.

Ready to secure your agentic workforce? Book a demo of Noma’s Agent Runtime Security for Cursor today.