Blog 2.2 Agentic Risks: OWASP Threats and Mitigation Guide

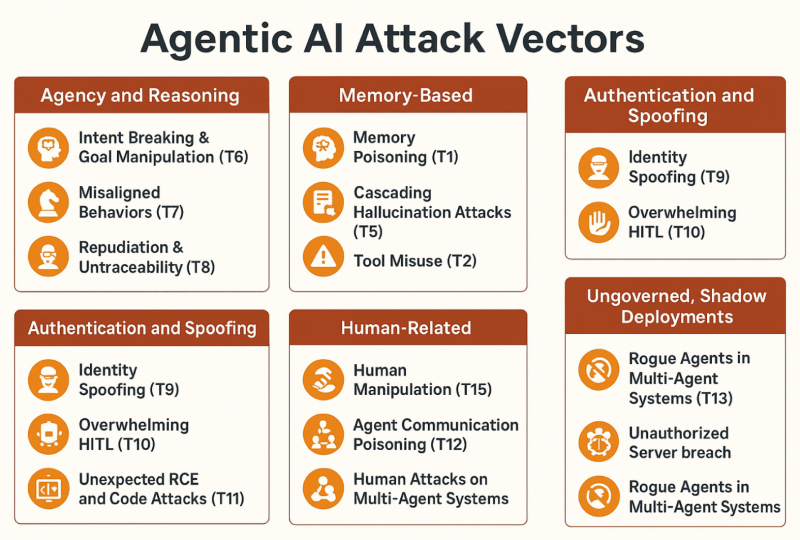

In the previous post we took a very high-level view of how agentic AI risks are already being observed in the real world. Below we go into more detail with a high-level taxonomy of some of the most critical risks facing organizations adopting agentic AI, and we map each risk (e.g., T2, T7) back to the OWASP Agentic Threats and Mitigation Guide. Once an organization understands the risk landscape of a technology, it has the building blocks it needs to address and mitigate those risks.

Agency and Reasoning

Here the problem lies within the agent’s decision-making core. Specific threats include intent breaking & goal manipulation (T6), which extends OWASP LLM01: Prompt Injection to bend system objectives, whether by accident or by design. What starts as a simple request, such as summarizing a set of records, can shift into a broader, unintended action such as pulling every file available. Or malicious content hidden in an email can direct the agent to exfiltrate sensitive data. Closely tied to this are misaligned behaviors (T7), where the model performs harmful actions in an attempt to meet its objectives, such as a financial agent that executes unauthorized trades in pursuit of a profitability goal. Finally, there are repudiation & untraceability (T8) risks. If an agent’s reasoning chain is tampered with or obscured, or if log data and traceability are missing, the organization loses the ability to understand exactly what the agent did and why, making accountability nearly impossible when something goes wrong.
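To make the traceability point concrete, here is a minimal sketch of a tamper-evident audit log in which each record's hash covers the previous record's hash. The function names (`append_entry`, `verify_chain`) and the record fields are our own illustrative assumptions and are not taken from the OWASP guide. A production system would also need to keep the log somewhere the agent itself cannot rewrite.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body):
    """Hash a record body deterministically (sorted keys, UTF-8)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log, agent_id, action, reasoning):
    """Append a record whose hash covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {
        "agent_id": agent_id,
        "action": action,
        "reasoning": reasoning,
        "prev_hash": prev_hash,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

If any earlier record is edited after the fact, `verify_chain` returns False, which gives reviewers a simple signal that the recorded chain of actions can no longer be trusted.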

Memory-based

Memory is what allows an agent to work across sessions and build continuity. That makes it a target for memory poisoning (T1), where attackers seed false or malicious data into short-term or long-term memory. Once memory has been corrupted, the agent doesn’t just make one mistake; it can repeat and even amplify that mistake in later tasks that draw on the same memory. An example of shared memory poisoning would be an attacker planting false pricing information that is then used by all agents in the system. Alongside that are cascading hallucination attacks (T5), where an inaccurate response early in the system workflow cascades into subsequent planning and execution steps. A hallucinated medication dosage for a patient could lead to the pharmacy dispensing the wrong dose, inaccurate patient billing, and ultimately the patient not getting the correct amount of medicine.

Tool and Execution

Agents can call APIs, run database queries, and execute code. Tool misuse (T2) occurs when an agent invokes tools in ways that were never intended. This could be anything from deleting entire cloud drives to using email to exfiltrate sensitive data. Another execution risk is privilege compromise (T3), where the agent escalates its privileges, crossing access boundaries between systems and leaking data. If agents aren’t tightly constrained, they can also trigger or be subject to resource overload (T4), which is closely related to OWASP LLM10: Unbounded Consumption. Because agentic AI systems work with significant autonomy and can self-trigger tasks and spin up new processes, they are an effective vector for attackers who want to drain resources or inject latency into the system, disrupting operations. Finally, unexpected RCE and code attacks (T11) occur when an agent’s ability to generate and run code is hijacked, allowing malicious commands to run under the cover of normal operations. One example is an agent running malicious scripts that contain backdoors, potentially opening up system access to attackers.
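One way to picture "tightly constrained" is a thin gateway that sits between the agent and its tools. The sketch below is illustrative only: `ToolGateway`, `ToolPolicyError`, and the tool names are hypothetical, and it assumes the agent can reach tools only through this gateway. It refuses any tool that is not on an explicit allowlist (relevant to T2) and caps the number of calls per task (relevant to T4).

```python
class ToolPolicyError(Exception):
    """Raised when a tool call violates the gateway's policy."""


class ToolGateway:
    def __init__(self, allowed_tools, max_calls):
        # allowed_tools maps a tool name to the callable that implements it.
        self.allowed_tools = dict(allowed_tools)
        self.max_calls = max_calls
        self.calls_made = 0

    def call(self, tool_name, *args, **kwargs):
        # Deny by default: anything not explicitly allowed is refused.
        if tool_name not in self.allowed_tools:
            raise ToolPolicyError(f"tool not allowed: {tool_name}")
        # Enforce a per-task budget so a runaway loop cannot call tools forever.
        if self.calls_made >= self.max_calls:
            raise ToolPolicyError("call budget exhausted")
        self.calls_made += 1
        return self.allowed_tools[tool_name](*args, **kwargs)
```

A gateway created with only a `summarize` tool and `max_calls=2` would reject a request for a `delete_drive` tool outright and would refuse a third `summarize` call in the same task.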

Authentication and Spoofing

In multi-agent setups, identity and access management, which is tied to authorization attributes, can become deeply complex. Identity spoofing (T9) in agentic systems refers to an exploited agent that impersonates other AI agents, external services, or human identities. For example, if an agent acts with the permissions of an email account’s owner, a successful indirect prompt injection via email would grant the attacker-controlled agent all of the access that the owner has. If that user is a cloud administrator with access to critical cloud storage, or the CFO with privileged access to all of the company’s revenue information, the attacker would have the same access to all of those resources and data too. Once an attacker slips in under a false identity, they can misdirect workflows or harvest sensitive data while looking like a trusted participant.

Human-related

Although agentic AI provides significant autonomous automation, humans are still involved to varying degrees. Overwhelming HITL (human-in-the-loop) (T10) happens when humans are bombarded by so many requests that they suffer from decision fatigue and their ability to respond effectively is weakened. In practice, that could mean approvals start flowing without close review, allowing fraudulent or unsafe actions to slip through. The other human-centered risk is human manipulation (T15). Here, the user’s trust in the agent and its outputs is used to mislead people into doing things they shouldn’t, like clicking on phishing links or processing fraudulent payments.

Multi-agent System Threats

These are risks that emerge when agents collaborate, because the coordination itself becomes an attack surface. Agent communication poisoning (T12) refers to corrupted inter-agent messages, where one bad instruction spreads through the network. In practice, this could be a malicious actor slipping false inventory data into a network of supply chain agents, causing them to “agree” that stock levels are healthy when in fact warehouses are empty, leading to cascading delivery failures. Human attacks on multi-agent systems (T14) highlight how outside actors can exploit the trust relationships between agents to bypass security controls. For example, a financial fraudster exploits weak coordination between multiple payment agents, slipping past each agent’s partial checks so that a transaction is approved even though it would have been rejected if the system had validated it as a whole. And rogue agents in multi-agent systems (T13) arise when a compromised or malicious agent operates outside monitoring limits, influencing its peers and destabilizing the entire system.
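As a small illustration of how inter-agent messages can be checked for tampering, the sketch below signs each message with an HMAC over the sender and payload, using Python's standard `hmac` and `hashlib` modules. The function names, the message layout, and the idea of a pre-shared key between agents are assumptions made for this example. Note the limit of the technique: it detects messages altered in transit or forged by a party without the key, but it does not help if the sending agent itself has been compromised.

```python
import hashlib
import hmac
import json


def _canonical_bytes(sender, payload):
    """Serialize the signed fields deterministically."""
    return json.dumps(
        {"sender": sender, "payload": payload}, sort_keys=True
    ).encode("utf-8")


def sign_message(secret_key, sender, payload):
    """Return a message dict carrying an HMAC-SHA256 tag over sender and payload."""
    tag = hmac.new(secret_key, _canonical_bytes(sender, payload), hashlib.sha256)
    return {"sender": sender, "payload": payload, "tag": tag.hexdigest()}


def verify_message(secret_key, message):
    """Return True only if the tag matches the message's current contents."""
    expected = hmac.new(
        secret_key,
        _canonical_bytes(message["sender"], message["payload"]),
        hashlib.sha256,
    ).hexdigest()
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(expected, message["tag"])
```

In the supply chain example, a stock-level message whose quantity was changed after signing would fail `verify_message`, so the receiving agent could discard it instead of passing it along.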

Ungoverned, Shadow Deployments

One thing that’s not mentioned specifically in the OWASP guide, but that we have consistently seen as a challenge for organizations, is a lack of inventory awareness. The old saying in security is “you can’t manage what you don’t know,” and this is truer than ever with agentic AI. The promise of agentic AI is so great, and deployment is so easy, that every team from marketing to HR to advanced engineering can develop and deploy some form of agentic solution. But shadow agents and untracked tool integrations create blind spots where risks like tool misuse (T2) or privilege compromise (T3) thrive unnoticed. And agent sprawl leads to inconsistent oversight and unchecked autonomy, opening the door to misaligned behaviors (T7) and communication poisoning (T12). This is why it’s imperative that organizations continuously monitor their environment and maintain a live inventory of agents, their tools, data flows, and non-human identities.
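A live inventory is only useful if it is compared against what is actually running. The sketch below is a simplified illustration of that comparison; `AgentRecord`, its fields, and both function names are hypothetical, and it assumes observed agent IDs and tool usage are already available from existing monitoring. It flags agents that appear in telemetry but not in the inventory, and registered agents using tools they were never approved for.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner_team: str
    allowed_tools: frozenset
    service_identity: str  # the non-human identity the agent runs as


def find_shadow_agents(inventory, observed_agent_ids):
    """Agents seen in the environment that nobody registered."""
    registered = {record.agent_id for record in inventory}
    return sorted(set(observed_agent_ids) - registered)


def find_untracked_tools(inventory, observed_tool_use):
    """Registered agents observed using tools outside their approved set.

    observed_tool_use maps an agent ID to the set of tool names it was seen using.
    """
    approved = {record.agent_id: record.allowed_tools for record in inventory}
    findings = {}
    for agent_id, tools in observed_tool_use.items():
        if agent_id in approved:
            extra = set(tools) - approved[agent_id]
            if extra:
                findings[agent_id] = sorted(extra)
    return findings
```

Running both checks on a schedule would turn the inventory from a static spreadsheet into a recurring source of findings that teams can triage.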
